Sector-wide digital transformations are redefining how business is done. AI adoption has characterized this shift, with computers now able to emulate many human cognitive tasks. AI can be leveraged to strengthen productivity, recognize patterns in data, predict outcomes, and make decisions, among other uses.

However, conversations about AI safety become non-negotiable when considering the many threats associated with AI.

AI risk management is essential for reducing bias and hallucinations in outputs and protecting data privacy and security. Without careful oversight, the challenges can outweigh the benefits.

This article explores AI safety. We’ll uncover common risks, identify who is responsible for ensuring AI safety, and explain why it’s more important than ever in the modern business environment.

- What is AI safety?

- Why is AI safety important?

- What is the difference between AI safety and AI security?

- Who is responsible for AI safety in organizations?

- What are the most common types of AI risks?

- What are the best practices for strengthening AI safety?

- Why will AI safety always be needed?

- People Also Ask

What is AI safety?

AI safety is a set of mechanisms, practices, and principles that ensure that AI technologies are developed with humanity’s benefit in mind and without resulting in unintended harm or negative consequences.

It involves examining the significant risks AI poses and implementing processes to mitigate them. This ensures that AI solutions remain within developers’ intended design parameters and comply with the appropriate laws and regulations.

To achieve this, those developing and deploying AI follow a range of operational measures and guiding principles.

Removing bias in training data and flawed reasoning in algorithms is just as important as setting up measures for data privacy and security. Evaluating system vulnerabilities to threats and defining terms of use through AI compliance and AI ethics are also essential.

AI safety matters throughout the development and deployment of AI. It focuses on addressing bias, security risks, and ethical issues so that AI follows the law and has a positive effect on society.

Why is AI safety important?

Modern enterprises are fast-tracking innovation through a host of digital tools and tech solutions, with AI gaining the most traction. However, without proper safety measures to govern and manage its use, AI can pose significant risks to security, ethics, and fairness.

AI safety helps prevent harmful consequences from using AI incorrectly. Without suitable safety measures, AI systems can make biased decisions, invade privacy, or even be used for harmful purposes.

According to the Emerging Technology Observatory, AI safety research is growing rapidly but still represents a small portion of the broader field. While progress is being made, more focus and investment are needed to address safety challenges as AI technologies evolve and expand.

We live in a time when it’s more important than ever for businesses to focus on AI safety and ensure their tools work fairly, securely, and responsibly.

Businesses need explicit rules to manage AI risks and should update them as technology changes. They must also teach employees about the ethical side of AI and ensure that AI decisions are easy to understand. This helps companies build trustworthy systems, protect their reputations, and avoid legal problems.

What is the difference between AI safety and AI security?

AI safety and AI security are both important aspects of ensuring AI systems work properly and responsibly.

While they are related, they focus on different areas of protection. Let’s take a closer look at the difference between the two:

AI safety

AI safety concerns designing AI systems to prevent harmful outcomes. This includes avoiding biased decisions, ensuring fairness, and guaranteeing that AI doesn’t cause unintended harm. It also focuses on ethical concerns, like ensuring that AI respects privacy and operates transparently. AI safety helps create systems that benefit everyone while reducing risks like discrimination or harm.

AI security

AI security, on the other hand, focuses on protecting AI systems from outside threats. This includes keeping them safe from hackers or malicious attacks that could cause unpredictable behavior or steal sensitive information. AI security safeguards AI tools and protects personal and business data.

Both AI safety and security are essential for creating trustworthy and reliable AI systems that people can rely on.

Who is responsible for AI safety in organizations?

AI safety requires a holistic approach that involves multiple stakeholders across all business verticals. Creating safe AI demands a collaborative effort involving C-suite executives, data scientists, AI developers, employees, and policymakers.

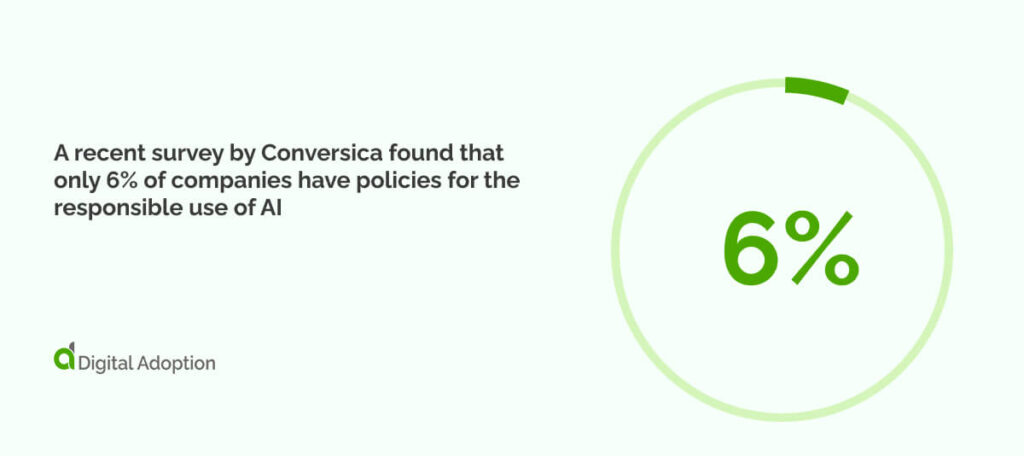

A recent survey by Conversica found that only 6% of companies have policies for the responsible use of AI, despite 73% recognizing their importance. This highlights a significant gap in assigning clear responsibility for AI governance within organizations.

With that in mind, let’s take a look at who is responsible for AI safety in more detail:

AI data scientists and developers

AI data scientists and developers design and build AI systems and ensure their security. They test for vulnerabilities, fix security issues, and improve AI models. Their job is to ensure that AI is safe, works properly, and protects people’s data so it doesn’t cause harm or errors.

Tech industry leaders

Tech industry leaders, such as chief technology officers (CTOs), guide companies in creating secure and reliable AI systems. They set policies and practices for AI safety within their organizations and collaborate with other experts to anticipate security threats. Thus, they ensure that the AI tools they create are safe for users and society.

Government bodies and regulatory agencies

Government bodies and regulatory agencies create rules that control how AI is developed and used, especially in the context of government digital transformation. They set guidelines to protect privacy, ensure fairness, and prevent harm. These organizations monitor AI practices, enforce laws, and provide companies with regulations to keep AI systems secure and safe for everyone.

Nonprofit organizations (NGOs) and advocacy groups

Nonprofit organizations and advocacy groups work to protect the public from the risks of AI. They focus on promoting fairness, privacy, and transparency in AI systems. These groups push for stronger regulations, raise awareness of AI issues, and help ensure that AI technologies benefit society without causing undue harm.

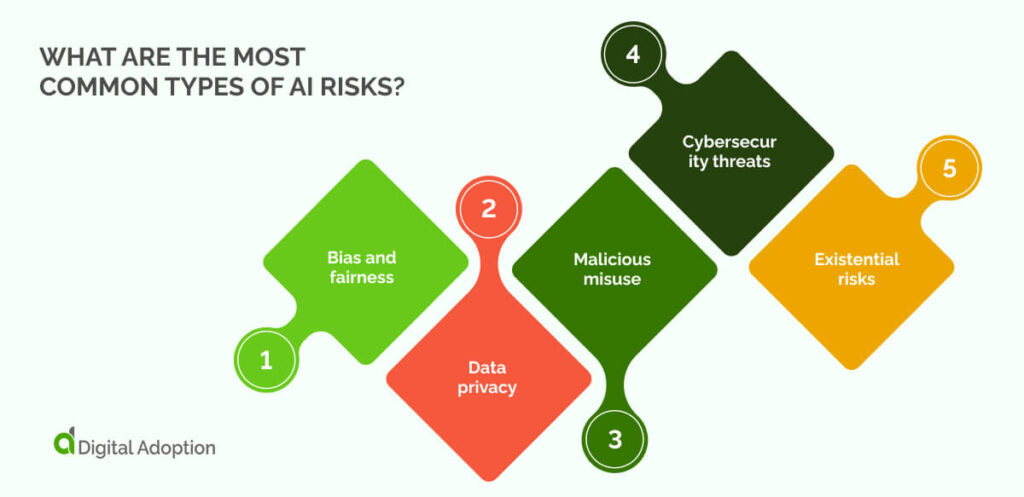

What are the most common types of AI risks?

Now that you know who is responsible for AI safety, the next step is to explore the most common types of AI safety risks. Understanding these risks will help you take a proactive approach to managing them effectively.

Let’s take a closer look at the most common types of AI risks:

Bias and fairness

Bias in AI occurs when algorithms make decisions based on incomplete or unfair data, leading to unjust outcomes. These outcomes can affect people’s lives in areas such as job hiring or loan approvals. Ensuring fairness means training AI models on diverse, unbiased data and constantly checking for patterns that favor one group over others.

Data privacy

Data privacy is about protecting personal information from being misused or exposed. AI systems often rely on large datasets, which can include sensitive details. If not properly handled, this data can be leaked or used inappropriately, causing harm to individuals. Ensuring strong privacy protection is key to keeping users safe.

Malicious misuse

Bad actors can misuse AI for harmful purposes. This includes creating deepfakes, automating cyberattacks, or spreading disinformation. Malicious misuse can cause significant damage to individuals, businesses, and even governments. Strict guidelines and strong security measures must be implemented to prevent this when deploying AI systems.

Cybersecurity threats

AI systems are vulnerable to cyberattacks, in which hackers try to manipulate or control AI models. These attacks can lead to incorrect decisions or expose private data. Therefore, it is crucial to ensure robust security, constant monitoring, and rapid responses to vulnerabilities to protect AI systems from cybersecurity threats.

Existential risks

Existential risks refer to the long-term dangers AI might pose to humanity if it becomes too advanced or uncontrollable. This includes the possibility of AI systems acting in ways that harm humans or disrupt societies. Proper safety measures and ethical considerations are essential to prevent unintended consequences that could endanger the future.

What are the best practices for strengthening AI safety?

Understanding the risks is one thing; knowing the best practices for proactively managing and mitigating them is the next step.

Let’s take a closer look at the best practices for strengthening AI safety:

Detect and limit algorithmic bias

Spotting and limiting bias in AI systems is crucial for strengthening AI safety. Bias can lead to unfair decisions in areas such as hiring or lending. Regularly checking AI models for biased results and making necessary adjustments ensures that AI works fairly for everyone and doesn’t harm certain groups.
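As a rough illustration of what regularly checking a model for biased results can look like, the Python sketch below compares approval rates across two groups. The sample data, the group labels, the function names, and the 0.8 review threshold are all assumptions made for this example (the threshold echoes the commonly cited four-fifths rule of thumb). Real audits typically use far larger samples and metrics chosen for the specific use case.

```python
from collections import defaultdict


def selection_rates(decisions, groups):
    """Return the share of positive decisions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += decision
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive decision
    return min(rates.values()) / highest


# Hypothetical loan decisions (1 = approved) for applicants in groups A and B.
decisions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = selection_rates(decisions, groups)
ratio = disparate_impact_ratio(rates)

# Assumed cut-off for this sketch; pick one that fits your own context.
REVIEW_THRESHOLD = 0.8
needs_review = ratio < REVIEW_THRESHOLD

print(rates, round(ratio, 2), needs_review)
```

With this sample data, group A is approved 75% of the time and group B 25% of the time, giving a ratio of about 0.33, so the check flags the model for review. A single ratio like this is only a starting point; it shows that outcomes differ, not why they differ.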

Ensure transparency and explainability in AI

AI systems should be easy to understand. When an AI system makes a decision, it should be possible to explain how and why that choice was made. Making AI more transparent helps users trust it. When something goes wrong, transparency also makes it easier to find and fix problems, improving overall security and fairness.
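One simple way to picture explainability is a model whose score can be broken down feature by feature. The Python sketch below assumes a hypothetical linear credit-scoring model; the weights, the baseline value, and the feature names are invented for illustration and do not come from any real system. More complex models generally need dedicated explanation techniques, which this sketch does not cover.

```python
# Hypothetical weights and baseline for a simple linear scoring model.
WEIGHTS = {"income_k": 0.5, "years_employed": 2.0, "missed_payments": -10.0}
BASELINE = 20.0


def score_with_explanation(features):
    """Return the score plus each feature's contribution, largest effect first."""
    contributions = {
        name: WEIGHTS[name] * value for name, value in features.items()
    }
    score = BASELINE + sum(contributions.values())
    ranked = sorted(
        contributions.items(), key=lambda item: abs(item[1]), reverse=True
    )
    return score, ranked


# Hypothetical applicant used only to demonstrate the breakdown.
applicant = {"income_k": 60, "years_employed": 4, "missed_payments": 2}
score, ranked = score_with_explanation(applicant)

print(f"score = {score}")
for name, contribution in ranked:
    print(f"{name}: {contribution:+.1f}")
```

For this applicant the score is 38.0, with income adding 30 points, missed payments subtracting 20, and years employed adding 8. Showing a breakdown like this alongside a decision gives users and reviewers something concrete to question when a result looks wrong.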

Establish data security protocols

Data security protocols help protect important and private information from theft or misuse. Passwords, encryption, and access limits keep data safe. Strong security also helps prevent AI systems from being hacked or leaking sensitive information, ensuring AI works correctly and protecting users’ privacy.
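To make the idea of access limits more concrete, here is a minimal Python sketch, using only the standard library, that replaces a raw identifier with a keyed hash and filters a record by role. Note that keyed hashing is pseudonymization rather than encryption, and it is swapped in here purely for illustration. The roles, field names, and inline key generation are assumptions; in practice the key would normally live in a secrets manager and the permissions in a proper access-control system.

```python
import hashlib
import hmac
import secrets

# Assumed setup: generated inline for the sketch; store real keys securely.
PSEUDONYM_KEY = secrets.token_bytes(32)

# Hypothetical mapping of roles to the fields they may see.
ALLOWED_FIELDS = {
    "analyst": {"user_ref", "loan_amount"},
    "auditor": {"user_ref", "loan_amount", "decision"},
}


def pseudonymize(value: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Replace an identifier with a keyed SHA-256 hash (HMAC)."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def view_for_role(record: dict, role: str) -> dict:
    """Return only the fields the given role is allowed to see."""
    allowed = ALLOWED_FIELDS.get(role, set())
    return {name: value for name, value in record.items() if name in allowed}


# Hypothetical record: the email address is never stored in raw form.
record = {
    "user_ref": pseudonymize("jane.doe@example.com"),
    "loan_amount": 12000,
    "decision": "approved",
}

analyst_view = view_for_role(record, "analyst")
print(sorted(analyst_view))
```

Here the analyst sees only the pseudonymous reference and the loan amount, while an unknown role gets an empty view because access is denied by default.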

Develop ethical AI frameworks

Creating ethical AI frameworks means setting rules to ensure that AI is developed fairly and responsibly. When building AI, these guidelines help developers consider privacy, fairness, and safety. Ethical frameworks ensure that AI technology benefits people and doesn’t harm them, promoting trust and safety.

Implement AI governance frameworks

AI governance frameworks set clear rules for how AI is developed and used. They guide companies to ensure systems are safe, ethical, and legal. These frameworks help prevent risks like bias or security issues and promote responsible, transparent use of AI, building trust with users and regulators.

Why will AI safety always be needed?

As AI becomes more common, managing its use properly is increasingly seen as a digital adoption challenge for businesses.

Companies must ensure they use AI ethically and securely across all their systems.

AI safety will always be important because its impact grows as AI becomes part of everyday life. While AI can change industries for the better, it also comes with risks, like unexpected problems, ethical issues, and security threats. These risks will evolve as technology advances, so safety measures will always be necessary.

AI will keep learning and growing, which means the chance of harm increases if it’s not carefully monitored. Developers, businesses, and regulators must stay alert to ensure AI’s power doesn’t outpace the rules meant to protect us.

Safety rules and ethical standards must be updated regularly to prevent AI bias or misuse. As AI becomes a bigger part of our lives, it’s essential to continue working on safety to ensure it helps society without harming people.

People Also Ask

Which emerging technologies are influencing the future of AI safety?

New technologies like blockchain, quantum computing, and advances in machine learning may help make AI safer. Blockchain can help make AI systems more transparent, so it’s easier to see how they work. Quantum computing could help make AI systems more secure. Better algorithms are being developed to reduce mistakes and bias, which will make AI systems safer, more reliable, and trustworthy in the future.

What are the typical compliance risks related to AI?

AI compliance risks include breaking data privacy laws, like GDPR, or using biased data that leads to unfair results. AI systems might also fail to meet rules or ethical standards, which could cause legal problems, damage a company’s reputation, and hurt AI users.

Is AI a security risk?

AI can be a security risk if not properly managed. It can be hacked or used for harmful purposes, like spreading misinformation or stealing data. However, with proper safety measures, AI can be secure and help protect systems, making it less likely to be a threat.